How do I enumerate more root domain names than others?

Hi, it's me again, Inderjeet, and today I will talk about how I collect root domain names. This article is about 1,000 words, but I wrote it as if I were hacking right now.

Root Domains

I always make sure that I have more root domain names than anyone else. I am not talking about subdomains here; that comes next. The first step is to collect as many root domain names as possible, and for that I use several techniques:

1. Collect root domain names from crt.sh
2. Collect root domain names from Google or other search engines
3. ASNs/IP ranges and reverse IP lookup
4. JavaScript files
5. Redirections
6. Shodan

crt.sh

It is important to squeeze everything you can out of a tool or service to get more results. On crt.sh, you can search by certificate fingerprint, domain name, or organization name.

Then copy and paste all the root domain names from the results into a text file.

A better way is to pull the JSON output from the command line:

wget 'https://crt.sh/?q=Paytm+Payments&output=json' -O crtsh.txt

jq -r '.[].common_name // empty' crtsh.txt | sed 's/^\*\.//' | sort -u | tee -a domains.txt
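If you would rather do this step in Python, here is a minimal sketch of the same idea. It assumes crt.sh returns a JSON list of objects with `common_name` and `name_value` fields, where `name_value` may hold several newline-separated names. The `naive_root` helper is my own simplification: it keeps the last two labels, so it gets suffixes like `.co.uk` wrong; a Public Suffix List library such as tldextract is the safer choice.

```python
import json
import urllib.parse
import urllib.request


def naive_root(hostname: str) -> str:
    """Keep the last two labels of a hostname (naive: wrong for suffixes like co.uk)."""
    labels = hostname.strip().lower().lstrip("*.").rstrip(".").split(".")
    return ".".join(labels[-2:])


def roots_from_crtsh(records: list) -> set:
    """Collect root domains from crt.sh JSON records (common_name and name_value fields)."""
    roots = set()
    for record in records:
        names = [record.get("common_name") or ""]
        # name_value can hold several certificate names separated by newlines
        names += (record.get("name_value") or "").splitlines()
        for name in names:
            name = name.strip()
            # skip empty values, e-mail addresses and organization-style names with spaces
            if name and "." in name and "@" not in name and " " not in name:
                roots.add(naive_root(name))
    return roots


def fetch_crtsh(query: str) -> list:
    """Download crt.sh results as JSON (network call; crt.sh is often slow)."""
    url = "https://crt.sh/?" + urllib.parse.urlencode({"q": query, "output": "json"})
    with urllib.request.urlopen(url, timeout=120) as resp:
        return json.load(resp)
```

For example, `roots_from_crtsh(fetch_crtsh("Paytm Payments"))` should give you a set of root domains to review before appending them to domains.txt. crt.sh also matches certificates that mention partners and vendors, so check each domain before treating it as in scope.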

Google Dorks

This one I learned from @jhaddix (Jason Haddix): use Google dorks to find more sites that belong to the organization you are hacking on:

intext:"Tesla © 2022"

Copyright footers at the bottom of websites often reveal other domains owned by the same company. Tweak the dork a little: change the year to 2021 or 2020.
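Trying every year by hand gets boring, so here is a small sketch that generates the footer dorks for a range of years. The `-site:` exclusions are an optional addition of mine to hide domains you already have; the function name and parameters are hypothetical.

```python
def copyright_dorks(company: str, years, known_domains=()) -> list:
    """Build one copyright-footer dork per year, optionally excluding known domains."""
    exclusions = " ".join(f"-site:{domain}" for domain in known_domains)
    dorks = []
    for year in years:
        dork = f'intext:"{company} © {year}"'
        if exclusions:
            dork += " " + exclusions  # hide results from domains already in domains.txt
        dorks.append(dork)
    return dorks
```

For example, `copyright_dorks("Tesla", range(2022, 2019, -1), ["tesla.com"])` produces three dorks, from 2022 down to 2020, each excluding tesla.com.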

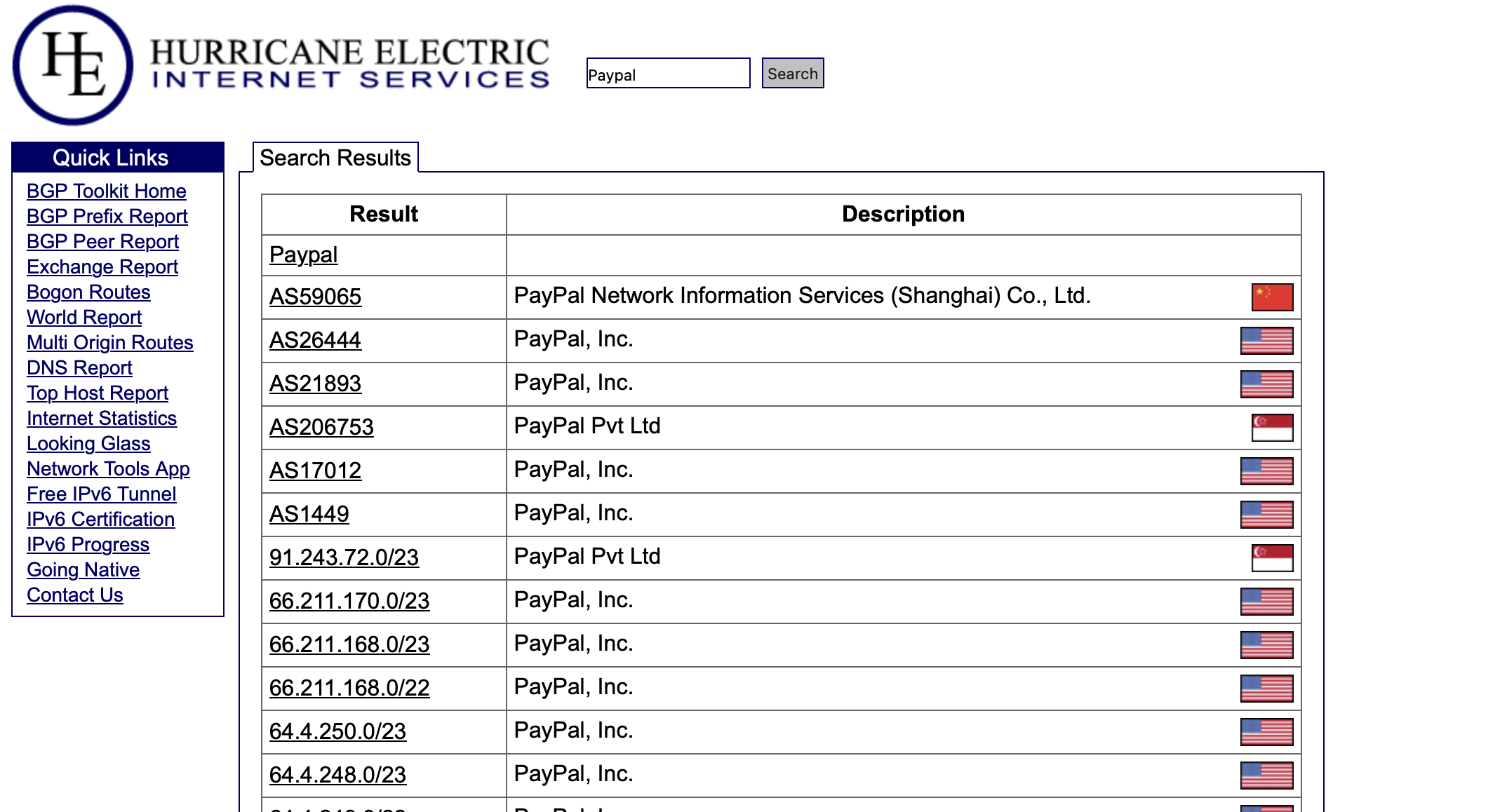

ASN and Reverse IP Lookup

This is the most interesting technique, and it's a goldmine for organizations that own their own IP space. Organizations like Yahoo, Snapchat, Google, and Facebook have their own ASNs (Autonomous System Numbers) with reserved IP ranges. ASN information is public, so I find all the IP ranges and then use masscan to scan them for common web ports such as 80, 443, 8080, 8081, 6443, 7443, and 8443, plus services like MySQL on 3306.

This strategy does not apply to every target, but use it when you are hacking on a bigger organization that has its own ASN.

Use bgp.he.net to find an organization's ASNs and the IP ranges announced under them.
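The "reverse IP lookup" part can be done in several ways. One simple form is a PTR (reverse DNS) lookup on every address in the ranges you found. The sketch below assumes the ranges are saved one CIDR per line (ranges.txt is just an example name), and it does lookups one at a time, so large ranges will be slow. Many IPs have no PTR record at all, which is normal.

```python
import ipaddress
import socket
from typing import List, Optional


def expand_ranges(cidrs: List[str]) -> List[str]:
    """Expand CIDR ranges (e.g. prefixes listed on bgp.he.net) into individual host IPs."""
    ips = []
    for cidr in cidrs:
        network = ipaddress.ip_network(cidr.strip(), strict=False)
        ips.extend(str(ip) for ip in network.hosts())
    return ips


def ptr_lookup(ip: str) -> Optional[str]:
    """Return the PTR (reverse DNS) name for an IP, or None if the lookup fails."""
    try:
        return socket.gethostbyaddr(ip)[0]
    except OSError:  # socket.herror and socket.gaierror are both OSError subclasses
        return None
```

For example, read ranges.txt into a list of lines, pass it to `expand_ranges`, and print each IP for which `ptr_lookup` returns a name. Hostnames on new root domains still deserve a whois check before they go into domains.txt.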

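Now you can use masscan to find open ports on these IP ranges. Note that masscan only performs SYN ("stealth") scans, the equivalent of nmap's -sS; it has no connect scan. To compare both, also run nmap with the connect scan flag (-sT) on the same ranges. In most cases, you will get fewer results with the SYN scan and more with the connect scan.

masscan -iL ranges.txt -p 80,443,6443,7443,8443,8080,8081,3306 --rate 1000 -oL masscan.txt

nmap -sT -iL ranges.txt -p 80,443,6443,7443,8443,8080,8081,3306 -oN nmap.txt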
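Open ports are only useful once you visit them. Assuming you saved masscan's results in its list format (`-oL masscan.txt`, with lines like `open tcp 443 192.0.2.10 1650000000`), this sketch turns them into URLs you can open or probe for certificates and redirects that reveal domain names. Treating 443, 6443, 7443, and 8443 as HTTPS ports is my assumption, not a rule.

```python
def parse_masscan_list(text: str) -> list:
    """Parse masscan -oL lines such as 'open tcp 443 192.0.2.10 1650000000' into (ip, port)."""
    hits = set()
    for line in text.splitlines():
        parts = line.split()
        # skip comment lines ('#masscan', '# end') and anything that is not an open port
        if len(parts) >= 4 and parts[0] == "open":
            hits.add((parts[3], int(parts[2])))
    return sorted(hits)


TLS_PORTS = {443, 6443, 7443, 8443}  # assumption: these ports usually speak HTTPS


def to_urls(hits) -> list:
    """Turn (ip, port) pairs into URLs worth opening in a browser or HTTP prober."""
    urls = []
    for ip, port in hits:
        if port == 3306:
            continue  # MySQL, not a web server
        scheme = "https" if port in TLS_PORTS else "http"
        urls.append(f"{scheme}://{ip}:{port}")
    return urls
```

For example, `to_urls(parse_masscan_list(open("masscan.txt").read()))` gives one URL per open web port.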

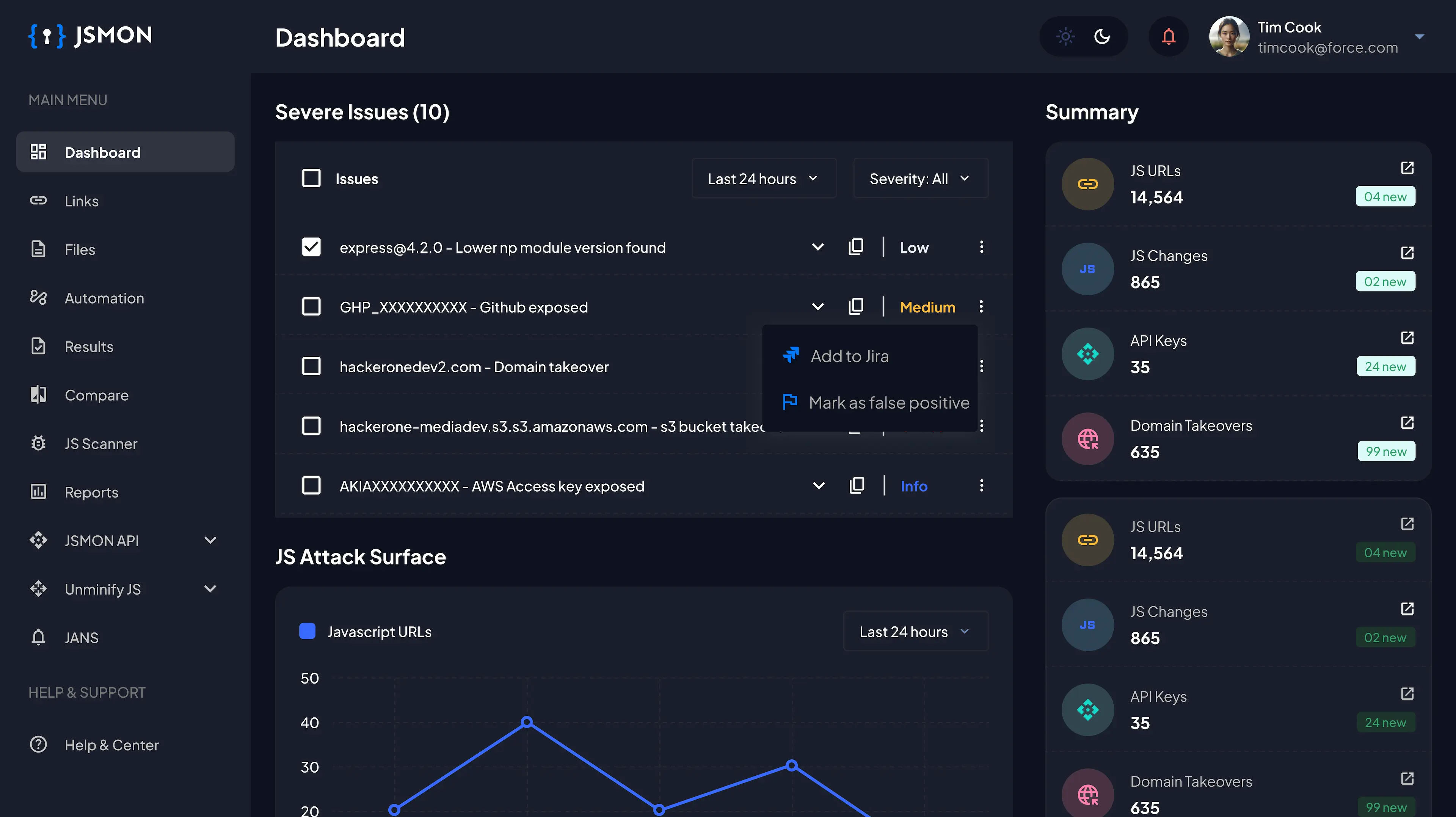

JavaScript Files and Source Code

Ahaa, JS files are a juicy source of data if you go through them carefully. Client-side JS files contain endpoints, domain names, API keys, secrets, etc.

Also, right-click and view the page source: developers sometimes leave secrets in the source code, in comments or in hidden attributes and fields.
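To check page sources at scale, here is a sketch built on Python's standard `html.parser` module. It only collects HTML comments, `<input type="hidden">` fields, and elements carrying a `hidden` attribute; the class name is hypothetical, and anything injected later by JavaScript will not appear in the raw source.

```python
from html.parser import HTMLParser


class SourceSecretsParser(HTMLParser):
    """Collect HTML comments, hidden <input> fields and elements with a `hidden` attribute."""

    def __init__(self):
        super().__init__()
        self.comments = []
        self.hidden_inputs = []
        self.hidden_elements = []

    def handle_comment(self, data):
        self.comments.append(data.strip())

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)  # valueless attributes such as `hidden` map to None
        if tag == "input" and (attributes.get("type") or "").lower() == "hidden":
            self.hidden_inputs.append((attributes.get("name"), attributes.get("value")))
        if "hidden" in attributes:
            self.hidden_elements.append(tag)
```

Feed it the page source (for example, saved from `curl -s https://example.com`) with `parser.feed(html)`, then read `parser.comments`, `parser.hidden_inputs`, and `parser.hidden_elements`.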

Install LinkFinder from GitHub and pass the JavaScript files to it to extract links and endpoints:

python3 linkfinder.py -i https://redacted.com/app.js -o cli

Use -o cli to print the results in the terminal, or -o results.html to save an HTML report.

Install the JS Miner and JS Link Finder extensions in Burp Suite.

These extensions analyze JS files in the background while your traffic goes through Burp Suite, and the results show up in the dashboard. Believe me, these extensions find very juicy results.

Go through these files manually at least once. I browse through all the JS files in Sublime Text, and my eyes always find more endpoints and URLs than extensions and tools do. Skip CDN-hosted files and third-party library JavaScript; focus on files like index.js, main.js, app.js, 1.js, or 2.js.
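When the files pile up, a quick regex pass can pull hostnames out before you read them by hand. This sketch only looks at absolute (`https://host`) and protocol-relative (`//host`) URLs, which keeps out noise like `document.getElementById` but misses bare hostnames; the regex and the two-label `naive_root` helper are my assumptions, not part of any tool mentioned here.

```python
import re
import sys

# Hostnames inside absolute ("https://host") or protocol-relative ("//host") URLs.
# Heuristic only: bare hostnames without a URL prefix are ignored.
URL_HOST = re.compile(r"(?:https?:)?//([A-Za-z0-9-]+(?:\.[A-Za-z0-9-]+)*\.[A-Za-z]{2,})")


def hosts_in_js(source: str) -> set:
    """Return the lowercase hostnames found in URLs inside JavaScript source."""
    return {host.lower() for host in URL_HOST.findall(source)}


def naive_root(hostname: str) -> str:
    """Keep the last two labels (naive: wrong for suffixes like co.uk)."""
    return ".".join(hostname.split(".")[-2:])


if __name__ == "__main__":
    # usage: python3 js_hosts.py main.js app.js 1.js  (script name is just an example)
    for path in sys.argv[1:]:
        with open(path, encoding="utf-8", errors="replace") as f:
            for host in sorted(hosts_in_js(f.read())):
                print(path, host, naive_root(host))
```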

Use the Wayback Machine to see what these JS files looked like in the past; older versions may still reference domains that have since been removed.
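The Wayback Machine exposes a CDX API that lists archived captures of a URL. Here is a minimal sketch, assuming the public endpoint at web.archive.org/cdx/search/cdx: it asks for successful captures only, collapses captures with identical content (`collapse=digest`), and builds raw snapshot URLs you can then pass to LinkFinder or read manually. The function names are hypothetical.

```python
import json
import urllib.parse
import urllib.request

CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def cdx_query_url(js_url: str) -> str:
    """Build a Wayback CDX query that lists distinct archived versions of one file."""
    params = {
        "url": js_url,
        "output": "json",
        "fl": "timestamp,original",
        "filter": "statuscode:200",
        "collapse": "digest",  # drop captures whose content did not change
    }
    return CDX_ENDPOINT + "?" + urllib.parse.urlencode(params)


def snapshot_urls(cdx_rows: list) -> list:
    """Turn CDX JSON rows (the first row is the header) into raw snapshot URLs."""
    if not cdx_rows:
        return []
    header, *rows = cdx_rows
    ts_index, original_index = header.index("timestamp"), header.index("original")
    # "id_" after the timestamp asks for the archived bytes without the Wayback toolbar
    return [f"https://web.archive.org/web/{row[ts_index]}id_/{row[original_index]}" for row in rows]


def fetch_snapshot_urls(js_url: str) -> list:
    """Query the CDX API over the network and return snapshot URLs for one JS file."""
    with urllib.request.urlopen(cdx_query_url(js_url), timeout=60) as resp:
        body = resp.read()
    return snapshot_urls(json.loads(body) if body.strip() else [])
```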

Redirections and Manual Browsing

I don't know if this has happened to you, but it has happened to me many times: I visit example.com, and the server responds with a 301 redirect to example.io. These are two different root domain names.

Don't skip domains just because they return a 301 or 302 status code: the domains they redirect to are gold mines.
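To catch these automatically, you can request each domain without following redirects and compare the root domain of the `Location` header. This sketch uses Python's standard `http.client`, which never follows redirects on its own; it only checks `HEAD /` over HTTPS (some servers handle HEAD differently from GET), and `naive_root` is a naive two-label helper, so treat both as assumptions.

```python
import http.client
from typing import Optional
from urllib.parse import urlsplit


def naive_root(hostname: str) -> str:
    """Keep the last two labels of a hostname (naive: wrong for suffixes like co.uk)."""
    return ".".join(hostname.lower().rstrip(".").split(".")[-2:])


def redirect_target(host: str) -> Optional[str]:
    """Send HEAD / over HTTPS without following redirects; return the Location header if any."""
    conn = http.client.HTTPSConnection(host, timeout=10)
    try:
        conn.request("HEAD", "/")
        resp = conn.getresponse()
        if resp.status in (301, 302, 303, 307, 308):
            return resp.getheader("Location")
        return None
    finally:
        conn.close()


def new_root_from_redirect(source_host: str, location: Optional[str]) -> Optional[str]:
    """Return the redirect's root domain if it differs from the source host's root domain."""
    if not location:
        return None
    target_host = urlsplit(location).hostname
    if target_host is None:  # relative redirects like "/login" stay on the same host
        return None
    target_root = naive_root(target_host)
    return target_root if target_root != naive_root(source_host) else None
```

For example, `new_root_from_redirect("example.com", redirect_target("example.com"))` would return `"example.io"` in the scenario above, and `None` when the redirect stays on the same root domain.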

Whenever I find something like this, I run a whois lookup on the new domain and add it to the domains.txt file if the Registrant Organization or registrant email matches the target's.
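Comparing whois records by eye gets tedious, so here is a rough sketch. It assumes the `whois` command-line tool is installed and that its output uses `Registrant Organization:` and `Registrant Email:` lines, which many (not all) registries and registrars print. Values redacted for privacy are ignored, so a missing match does not prove the domain is unrelated. The helper names are hypothetical.

```python
import subprocess

FIELDS = ("Registrant Organization", "Registrant Email")


def parse_whois(text: str) -> dict:
    """Pull the first 'Registrant Organization' and 'Registrant Email' values out of whois text."""
    found = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        key, value = key.strip(), value.strip()
        if sep and key in FIELDS and value and key not in found:
            found[key] = value
    return found


def same_owner(a: dict, b: dict) -> bool:
    """True if either field matches case-insensitively; redacted values never count."""
    for field in FIELDS:
        value_a, value_b = a.get(field, "").lower(), b.get(field, "").lower()
        if value_a and value_a == value_b and "redacted" not in value_a:
            return True
    return False


def whois_text(domain: str) -> str:
    """Run the system whois client (assumed to be installed) and return its output."""
    return subprocess.run(["whois", domain], capture_output=True, text=True, timeout=60).stdout
```

For example, `same_owner(parse_whois(whois_text("rashabase.com")), parse_whois(whois_text("casestudy.com")))` compares the target's record with the domain you were redirected to.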

Case Study:

About a month ago, I was hacking on a big organization that had a domain like admin-verification.rashabase.com (an example name). When I visited it, it redirected me to another domain, case1.casestudy.com. That app required a VPN connection, and I assumed casestudy.com was a third-party vendor.

I did a whois lookup on casestudy.com and found that the Registrant Organization was the same as the target's. I added this root domain name (casestudy.com) to my domains.txt, ran my subdomain enumeration script again, and boom!! I had many more subdomains, most of which didn't require the company VPN.

Shodan

Ahaa, Shodan is a factory of secrets when it comes to Internet-facing assets. I have already posted a free article on my Favourite Top 10 Shodan Dorks.

Here we are focused on root domain names, so use these dorks to find more of them:

ssl:"Organization-Name"

ssl.cert.subject.cn:redacted.com

ssl.cert.issuer.cn:apple.com

Also, install the shodan pip module (pip install shodan). It gives you a command-line client: run shodan init with your API key, then run queries with shodan search, for example shodan search --fields hostnames 'ssl:"Organization-Name"'. Pipe the results to a file and clean them up with cut and sort. Boom!! More results.
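The same cleanup can be done from Python with the shodan library. This sketch assumes you have installed it (`pip install shodan`) and pass your API key in; it reads the `hostnames`, `domains`, and TLS subject CN fields of each result and reduces them to naive two-label root domains (wrong for suffixes like `.co.uk`). `search_shodan` only fetches the first page of results, and both function names are hypothetical.

```python
def roots_from_matches(matches: list) -> set:
    """Collect root domains from Shodan banners: hostnames, domains and the TLS subject CN."""
    roots = set()
    for match in matches:
        names = list(match.get("hostnames", [])) + list(match.get("domains", []))
        cn = match.get("ssl", {}).get("cert", {}).get("subject", {}).get("CN")
        if cn:
            names.append(cn)
        for name in names:
            labels = name.strip().lower().rstrip(".").split(".")
            # skip organization-style CNs ("Example Inc.") that are not hostnames
            if len(labels) >= 2 and " " not in name:
                roots.add(".".join(labels[-2:]))  # naive root: wrong for co.uk-style suffixes
    return roots


def search_shodan(api_key: str, query: str) -> list:
    """Fetch one page of Shodan results (needs `pip install shodan` and an API key)."""
    import shodan  # imported here so roots_from_matches works without the package installed

    return shodan.Shodan(api_key).search(query)["matches"]
```

For example, `roots_from_matches(search_shodan(api_key, 'ssl:"Organization-Name"'))` returns the root domains seen in the first page of results.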

Conclusion

Use each technique carefully and think about when to use it and when not to. The same technique won't work on every target, right? Once you have collected all the root domain names, it's time to run your subdomain enumeration tools with your API keys loaded. What are you waiting for? Go hunt now.

Happy Hacking!!